AS Computing - Unit 1

Sound

What Is Sound?

'a mechanical disturbance from a state of equilibrium that propagates through an elastic material medium.'

Encyclopaedia Britannica

'an air pressure wave'

A Computing Text Book

In an analogue system, sound is captured by a transducer (perhaps a microphone) that produces an electrical signal that varies in proportion to the pressure created by the sound. That electrical signal can be transmitted or stored on a suitable medium (e.g. magnetic tape).

For the sound to be heard, the electrical signal must be used to recreate the original sound by vibrating a mechanical surface in a speaker. The term fidelity refers to the precision with which the original sound wave is recreated.

Vinyl

On vinyl LPs, sound was encoded into the shape of a spiral groove that ran across the surface of the record. To play the record, a fine needle followed the tiny changes in this groove, reading the changes in sound.

Playing vinyl produced a warm, rich sound that you don't get with a CD. Vinyl was no less reliable than CD. Album covers were much more impressive and the music lover's experience was richer for it.

As with many changes in format, the public were seriously ripped off when CDs were introduced.

Analogue Data

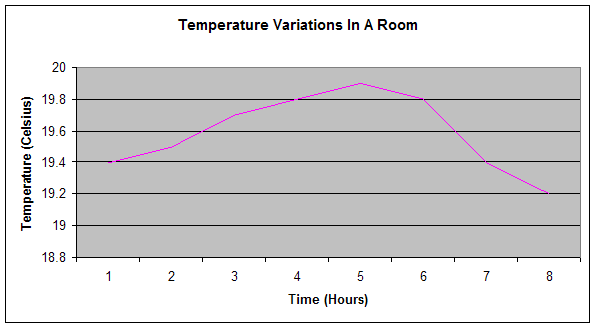

Physical quantities such as temperature and pressure vary continuously over time.

Digital Data

Digital data is discontinuous. It varies in discrete steps. Imagine that the temperature shown in the graph is measured at regular intervals and recorded as a series of discrete values. That is digital.

Data & Signals

An analogue signal is an electrical signal that varies continuously over time. A digital signal is an electrical signal that changes in discrete steps.

An Analogue to Digital Converter (ADC) samples the analogue signal at regular intervals and records each sample as a digital value.

Pulse Code Modulation

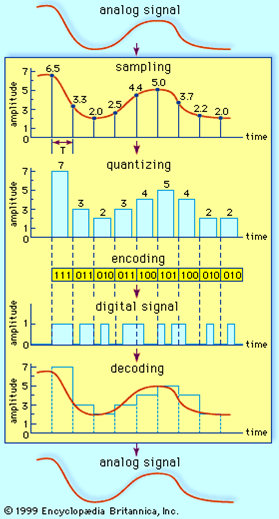

PCM is a process for coding sampled analogue signals by recording the height of each sample as a binary value.

A-D Conversion

Samples are taken of the analogue signal at fixed and regular intervals of time. The samples are represented as narrow voltage pulses, proportional in height to the original signal. This is called Pulse Amplitude Modulation (PAM).

PCM data is produced by quantising the PAM samples. That means that the height of each sample is approximated using an integer value of n bits.

The height of each PCM pulse is encoded using n bits to produce the output in binary signal form.
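The sampling and quantising steps above can be sketched in a few lines of Python. This is an illustration only, not a model of any particular ADC: the function names, the use of a sine wave as the input and the -1.0 to 1.0 signal range are all assumptions made for the example.

```python
import math

def sample_signal(frequency_hz, sample_rate_hz, duration_s):
    """PAM stage: take samples of a sine wave at regular intervals.

    Assumption: the analogue signal is a pure sine wave in the range -1.0 to 1.0.
    """
    count = round(sample_rate_hz * duration_s)
    return [math.sin(2 * math.pi * frequency_hz * i / sample_rate_hz)
            for i in range(count)]

def quantise(sample, bits):
    """PCM stage: approximate a sample in -1.0..1.0 as an integer of n bits."""
    levels = 2 ** bits                      # n bits give 2**n possible values
    clipped = max(-1.0, min(1.0, sample))   # keep the sample inside the range
    return min(levels - 1, int((clipped + 1.0) / 2.0 * levels))

def to_binary(code, bits):
    """Write the integer code as a string of exactly n binary digits."""
    return format(code, "0{}b".format(bits))

# Sample a 1000Hz tone at 8000Hz for one millisecond and encode in 8 bits.
for value in sample_signal(1000, 8000, 0.001):
    print(to_binary(quantise(value, 8), 8))
```

Each printed line is one PCM code. Using more bits gives more levels, so each code is a closer approximation of the sample it stands for.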

D-A Conversion

In order to play the digital sound, the process of conversion is reversed. The Digital to Analogue Converter (DAC) produces a signal which is an approximation of the original signal.

The entire encoding and decoding process is represented in the diagram.

The Maths Of Sampling

An analogue frequency of 1000Hz is converted to a PCM signal by sampling at a frequency of 2000Hz (2000 samples per second). Each sample is encoded in 8 bits using PCM coding.

How many bytes of storage are required to encode 10 seconds of the analogue signal?

2000 samples taken each second.

10 x 2000 = 20 000 samples.

1 byte (8 bits) for each sample.

20 000 x 1 byte = 20 000 bytes.
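The same calculation can be written as a small function so that other rates, resolutions and durations can be tried. The function name and the optional channels parameter are assumptions added for illustration; the worked example above is a single channel.

```python
def storage_bytes(sample_rate_hz, bits_per_sample, duration_s, channels=1):
    """Return the bytes needed to store uncompressed sampled sound.

    Assumes whole-number arguments. channels is a hypothetical extra
    (1 = mono, 2 = stereo) that is not part of the worked example.
    """
    total_bits = sample_rate_hz * bits_per_sample * duration_s * channels
    return (total_bits + 7) // 8   # round up to a whole number of bytes

# The worked example: 2000 samples per second, 8 bits each, 10 seconds.
print(storage_bytes(2000, 8, 10))   # 20000
```

Doubling the resolution to 16 bits, or doubling the sampling rate, doubles the storage required.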

Sampled Sound

Sampling Rate = The number of samples taken each second.

Measured in Hz or kHz.

Sampling Resolution = The number of bits used to store each sample.

Measured in bits (for example, 8-bit or 16-bit samples).

How Often To Sample

Nyquist's theorem states that we must sample at a rate of at least twice the highest frequency in the signal in order not to miss meaningful changes in the original signal.

The bandwidth of an analogue signal is the difference between the highest frequency and the lowest frequency of the signal. This is the maximum frequency range of the signal. The Nyquist interval is one over twice the bandwidth.

For a signal with a frequency range of 3000Hz, sampling intervals should be no more than 1/6000 seconds apart.
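As a quick check of that arithmetic, the two quantities can be computed directly. The function names are made up for this sketch, and the bandwidth is assumed to be a whole number of Hz so that the interval can be kept as an exact fraction.

```python
from fractions import Fraction

def minimum_sampling_rate(bandwidth_hz):
    """Lowest sampling rate (in Hz) that satisfies Nyquist's theorem."""
    return 2 * bandwidth_hz

def nyquist_interval(bandwidth_hz):
    """Longest allowed gap between samples, in seconds: 1 / (2 x bandwidth)."""
    return Fraction(1, 2 * bandwidth_hz)

print(minimum_sampling_rate(3000))   # 6000
print(nyquist_interval(3000))        # 1/6000
```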

Sampling Rate

The higher the sampling rate, the more often samples are taken and the more accurate the representation of the sound.

Sampling Resolution

A more accurate representation of the analogue signal can be achieved if more bits are used to store each sample.
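One way to see this is to quantise a range of values, convert each code back again, and measure the largest error. The sketch below assumes samples lie between -1.0 and 1.0 and that the decoder returns the midpoint of each step; both are choices made for the example rather than a description of real hardware.

```python
def quantise(sample, bits):
    """Approximate a sample in -1.0..1.0 as an integer code of n bits."""
    levels = 2 ** bits
    clipped = max(-1.0, min(1.0, sample))
    return min(levels - 1, int((clipped + 1.0) / 2.0 * levels))

def reconstruct(code, bits):
    """Turn a code back into a value: the midpoint of the step it represents."""
    levels = 2 ** bits
    return (code + 0.5) / levels * 2.0 - 1.0

def worst_error(bits, test_points=1000):
    """Largest difference between a sample and its reconstructed value."""
    errors = []
    for i in range(test_points + 1):
        sample = -1.0 + 2.0 * i / test_points
        errors.append(abs(sample - reconstruct(quantise(sample, bits), bits)))
    return max(errors)

for bits in (4, 8, 16):
    print(bits, "bits: worst error", worst_error(bits))
```

Each extra bit doubles the number of levels, so the worst-case error roughly halves.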

File Formats

WAV is a very common format for storing digitised sound. The WAV format allows a choice of sampling rate and sampling resolution. WAV files usually hold uncompressed PCM data, so file sizes are relatively large and fidelity is good.
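Python's standard library includes a wave module that can write WAV files, which makes it easy to experiment with different sampling rates. The sketch below writes a single tone as 16-bit mono PCM. The function name write_tone, the file name and the default values are assumptions chosen for the example.

```python
import math
import struct
import wave

def write_tone(path, frequency_hz=440, sample_rate_hz=8000, duration_s=1.0):
    """Write a sine tone to a 16-bit mono WAV file and return the sample count."""
    frame_count = round(sample_rate_hz * duration_s)
    frames = bytearray()
    for i in range(frame_count):
        value = math.sin(2 * math.pi * frequency_hz * i / sample_rate_hz)
        # Scale -1.0..1.0 to a signed 16-bit integer, stored little-endian.
        frames += struct.pack("<h", int(value * 32767))
    with wave.open(path, "wb") as wav_file:
        wav_file.setnchannels(1)               # mono
        wav_file.setsampwidth(2)               # 2 bytes = 16-bit resolution
        wav_file.setframerate(sample_rate_hz)  # sampling rate in Hz
        wav_file.writeframes(bytes(frames))
    return frame_count

if __name__ == "__main__":
    write_tone("tone.wav")   # hypothetical output file name
```

Changing sample_rate_hz and comparing the resulting file sizes shows the trade-off between fidelity and storage.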

Compressed Audio

MPEG audio files come in a variety of flavours and can carry extensions such as .mp2, .mpa, .mp3, .mp4.

The compression algorithms used to produce the files are based on psychoacoustic modelling - removing frequencies that the brain and ear will not miss.

File sizes are substantially reduced - some loss of quality can occur.

Compressed Speech

Data compression techniques are also used for encoding speech. These are different techniques to those used for compressing music.

Sound Mixing

Mixing sounds from different sources into a single file can be fun. Digital encoding of audio information makes this even easier than it was in the analogue past.
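With digital samples, the simplest form of mixing is adding the corresponding samples from each source together. The sketch below assumes both tracks are lists of signed integer samples at the same sampling rate, and limits the result to the range the resolution can hold. Real mixing software does considerably more than this; the function name is illustrative.

```python
def mix(track_a, track_b, bits=16):
    """Mix two lists of signed integer samples by adding them together."""
    highest = 2 ** (bits - 1) - 1      # 32767 for 16-bit samples
    lowest = -(2 ** (bits - 1))        # -32768 for 16-bit samples
    length = max(len(track_a), len(track_b))
    mixed = []
    for i in range(length):
        a = track_a[i] if i < len(track_a) else 0   # treat a finished track as silence
        b = track_b[i] if i < len(track_b) else 0
        mixed.append(max(lowest, min(highest, a + b)))  # clip to the legal range
    return mixed

print(mix([100, 200, 300], [50, -50]))   # [150, 150, 300]
```

Clipping distorts the sound when it happens, so tracks are often made quieter before they are added.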

Sound Synthesis

Sound synthesis means approximating real-world sounds using electronic equipment. Instruments like pianos can be emulated reasonably well; others are harder. MIDI (Musical Instrument Digital Interface) stores no sound data, simply notes, durations and instruments, so MIDI files are very compact.

Streaming Audio

An audio streaming client (say, RealPlayer) starts receiving audio data from a remote location. This data is stored in a buffer. Once there are a few seconds of data in the buffer, the client begins playback from the buffer. As long as the buffer does not run out of data, the sound will play without pause.
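The buffering behaviour described above can be modelled with a simple simulation. This is a toy model, not how any real player is written: time moves in one-second ticks, the list passed in gives the seconds of audio that arrive on each tick, and the three-second start threshold is an assumed value.

```python
def simulate_stream(arrivals, start_threshold=3):
    """Return what the client does on each one-second tick.

    arrivals: seconds of audio received during each tick.
    start_threshold: seconds that must be buffered before playback starts.
    """
    buffered = 0
    playing = False
    log = []
    for received in arrivals:
        buffered += received
        if not playing and buffered >= start_threshold:
            playing = True            # enough data stored: begin playback
        if playing and buffered == 0:
            playing = False           # buffer ran out: sound pauses
        if playing:
            buffered -= 1             # play one second from the buffer
            log.append("play")
        else:
            log.append("buffering")
    return log

print(simulate_stream([1, 1, 1, 1, 1]))
# ['buffering', 'buffering', 'play', 'play', 'play']
```

If data stops arriving, playback continues only until the buffer is empty, after which the client has to refill it before starting again.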

Sound Editing

Representing sound in digital form allows for editing. This might mean removing background noise or specific frequencies. It might mean cropping or merging with other sounds.
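Cropping is the easiest of these edits to show, because a digital recording is just a sequence of samples. The sketch below assumes the sound is held as a Python list and that times are given in seconds; the function name is illustrative.

```python
def crop(samples, sample_rate_hz, start_s, end_s):
    """Keep only the samples between start_s and end_s (in seconds)."""
    start = round(start_s * sample_rate_hz)   # index of the first sample to keep
    end = round(end_s * sample_rate_hz)       # index just past the last one
    return samples[start:end]

# Ten samples recorded at 2 samples per second; keep seconds 1 to 3.
print(crop(list(range(10)), 2, 1, 3))   # [2, 3, 4, 5]
```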